Question Generation using Natural Language Processing

Automatically generate edtech assessments such as MCQs, True/False questions, and fill-in-the-blanks using state-of-the-art NLP techniques

4.20 (240 reviews)

926

students

5.5 hours

content

Mar 2022

last update

$22.99

regular price

What you will learn

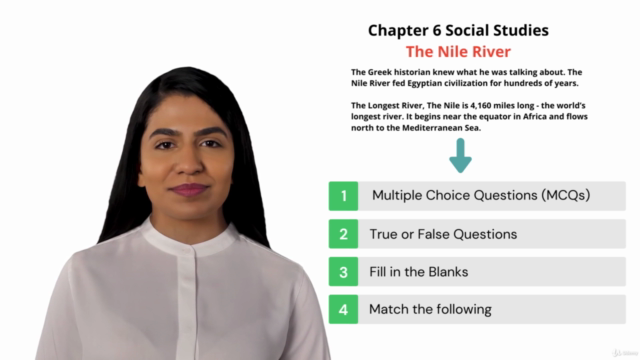

Generate assessments such as MCQs and True/False questions from any content using state-of-the-art natural language processing techniques.

Apply recent advancements like BERT, OpenAI GPT-2, and T5 transformers to solve real-world problems in edtech.

Use NLP libraries like spaCy, NLTK, AllenNLP, Hugging Face Transformers, etc.

Deploy transformer models like T5 to production in a serverless fashion by quantizing them with ONNX and serving them from a Docker container using FastAPI.

Use the Google Colab environment to run all these algorithms.
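To give a feel for what generating a fill-in-the-blank question involves, here is a minimal sketch in plain Python. It is not the course's method: the course relies on transformer models such as T5, whereas this toy example simply blanks out the longest non-stopword in a sentence. The function name `make_fill_in_the_blank` and the tiny `STOPWORDS` set are hypothetical and exist only for illustration.

```python
import re
from typing import Optional

# Hypothetical, deliberately tiny stopword list (an assumption for this sketch).
STOPWORDS = {"the", "a", "an", "of", "in", "on", "and", "is", "are",
             "to", "by", "for", "with", "into"}


def make_fill_in_the_blank(sentence: str) -> Optional[dict]:
    """Blank out the longest non-stopword in a sentence (toy heuristic)."""
    # Collect alphabetic tokens, allowing internal hyphens.
    words = re.findall(r"[A-Za-z][A-Za-z-]*", sentence)
    candidates = [w for w in words if w.lower() not in STOPWORDS]
    if not candidates:
        return None
    # max() returns the first longest word when several share the same length.
    answer = max(candidates, key=len)
    # Replace only the first whole-word occurrence of the answer.
    question = re.sub(rf"\b{re.escape(answer)}\b", "_____", sentence, count=1)
    return {"question": question, "answer": answer}


if __name__ == "__main__":
    print(make_fill_in_the_blank(
        "Photosynthesis converts light into chemical energy."))
```

A real pipeline would likely pick the answer with keyword extraction or a trained model rather than word length, and would generate distractors for MCQs separately.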

3713412

udemy ID

12/18/2020

course created date

2/18/2021

course indexed date

Bot

course submitted by