Learn BERT - essential NLP algorithm by Google

Understand and apply Google's game-changing NLP algorithm to real-world tasks. Build 2 NLP applications.

Rating: 4.31 (1191 reviews)

Students: 7,741

Content: 5.5 hours

Last update: Jan 2025

Regular price: $64.99

What you will learn

Understand the history of BERT and why it changed NLP more than any other algorithm in recent years

Understand how BERT differs from other standard algorithms and is closer to how humans process language

Use the tokenizing tools provided with BERT to preprocess text data efficiently

Use the BERT layer as an embedding and plug it into your own NLP model

Use BERT as a pre-trained model and then fine-tune it to get the most out of it

Explore the GitHub project from the Google research team to get the tools we need

Get models available on TensorFlow Hub, the platform where you can get pre-trained models

Clean text data

Create datasets for AI from that data

Use Google Colab and TensorFlow 2.0 for your AI implementations

Create custom layers and models in TF 2.0 for specific NLP tasks
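
To give a feel for the text cleaning and tokenizing steps listed above, here is a minimal sketch in plain Python. It is not the course's code and not the tokenizer shipped with BERT: `clean_text`, `wordpiece`, `tokenize`, and `TOY_VOCAB` are hypothetical names, and the tiny vocabulary is made up for illustration. The sketch only shows the greedy longest-match-first idea behind WordPiece, where a word missing from the vocabulary is split into known pieces and continuation pieces are prefixed with `##`.

```python
import re

# Hypothetical toy vocabulary; a real BERT vocabulary has about 30,000 entries.
TOY_VOCAB = {"play", "##ing", "##ed", "the", "game", "un", "##believ", "##able"}


def clean_text(text: str) -> str:
    """Lowercase, drop URLs, keep only letters, digits and spaces."""
    text = text.lower()
    text = re.sub(r"https?://\S+", " ", text)   # remove URLs
    text = re.sub(r"[^a-z0-9 ]", " ", text)     # remove punctuation and symbols
    return re.sub(r"\s+", " ", text).strip()    # collapse repeated whitespace


def wordpiece(word: str, vocab: set, unk: str = "[UNK]") -> list:
    """Split one word into the longest vocabulary pieces, left to right."""
    pieces, start = [], 0
    while start < len(word):
        end, match = len(word), None
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate    # mark a continuation piece
            if candidate in vocab:
                match = candidate
                break
            end -= 1                            # try a shorter piece
        if match is None:
            return [unk]                        # no piece fits: whole word is unknown
        pieces.append(match)
        start = end
    return pieces


def tokenize(text: str, vocab: set = TOY_VOCAB) -> list:
    """Clean the text, split it into word pieces, add BERT's special tokens."""
    tokens = ["[CLS]"]
    for word in clean_text(text).split():
        tokens.extend(wordpiece(word, vocab))
    tokens.append("[SEP]")
    return tokens


print(tokenize("Playing the game! https://example.com"))
print(tokenize("Unbelievable"))
```

In practice the pre-trained model comes with its own vocabulary file and tokenizer, so a hand-written version like this is useful mainly for understanding what the preprocessing step does.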

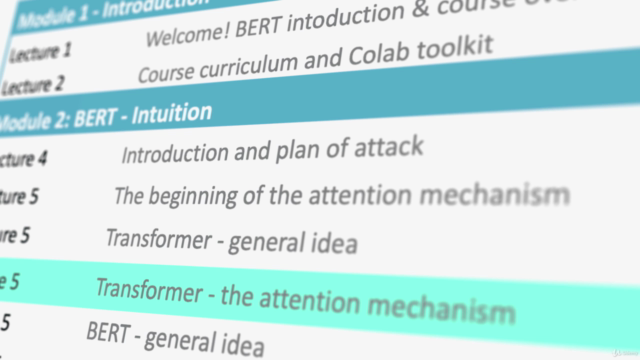

Screenshots

Udemy ID: 2518074

Course created date: 8/20/2019

Course indexed date: 1/7/2020

Course submitted by: Bot